Define point measure in statistics

7/18/2023

Types of point estimation Bayesian point estimation īayesian inference is typically based on the posterior distribution. A statistic T(X) is said to be sufficient for θ(or for the family of distribution) if the conditional distribution of X given T is free from θ.

We define sufficient statistics as follows: Let X =( X 1, X 2. Therefore, the statistician would like to condense the data by computing some statistics and to base his analysis on these statistics so that there is no loss of relevant information in doing so, that is the statistician would like to choose those statistics which exhaust all information about the parameter, which is contained in the sample. But in many cases the raw data, which are too numerous and too costly to store, are not suitable for this purpose. In statistics, the job of a statistician is to interpret the data that they have collected and to draw statistically valid conclusion about the population under investigation. For example, in a normal distribution, the mean is considered more efficient than the median, but the same does not apply in asymmetrical, or skewed, distributions. Generally, we must consider the distribution of the population when determining the efficiency of estimators. We extend the notion of efficiency by saying that estimator T 2 is more efficient than estimator T 1 (for the same parameter of interest), if the MSE( mean square error) of T 2 is smaller than the MSE of T 1. Therefore, if the estimator has smallest variance among sample to sample, it is both most efficient and unbiased. We can also say that the most efficient estimators are the ones with the least variability of outcomes. The estimator T 2 would be called more efficient than estimator T 1 if Var( T 2) < Var( T 1), irrespective of the value of θ. Let T 1 and T 2 be two unbiased estimators for the same parameter θ. An unbiased estimator is consistent if the limit of the variance of estimator T equals zero. If a point estimator is consistent, its expected value and variance should be close to the true value of the parameter. The larger the sample size, the more accurate the estimate is.

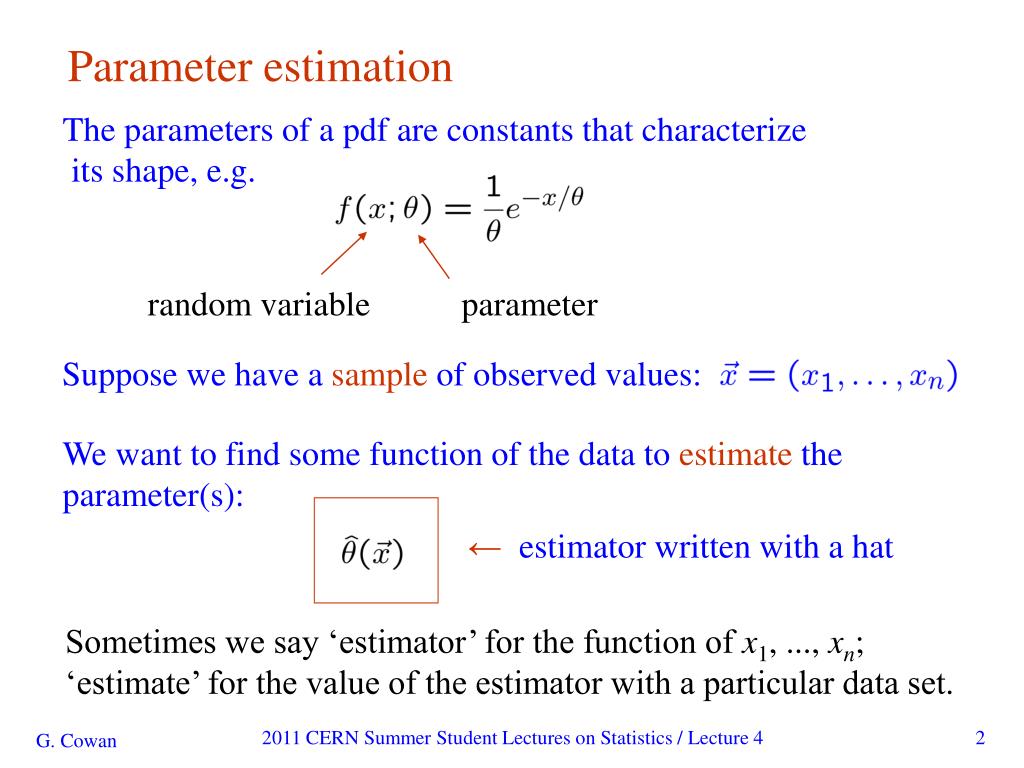

The difference E − θ is called the bias of T if this difference is nonzero, then T is called biased.Ĭonsistency is about whether the point estimate stays close to the value when the parameter increases its size. For example, from the same random sample we have E( x̄ ) = µ(mean) and E(s 2) = σ 2 (variance), then x̄ and s 2 would be unbiased estimators for µ and σ 2. , X n, the estimator T is called an unbiased estimator for the parameter θ if E = θ, irrespective of the value of θ. , X n) be an estimator based on a random sample X 1,X 2. Most importantly, we prefer point estimators that has the smallest mean square errors. However, A biased estimator with a small variance may be more useful than an unbiased estimator with a large variance. The estimator will become a best unbiased estimator if it has minimum variance. When the estimated number and the true value is equal, the estimator is considered unbiased. It can also be described that the closer the expected value of a parameter is to the measured parameter, the lesser the bias. “ Bias” is defined as the difference between the expected value of the estimator and the true value of the population parameter being estimated. Examples are given by confidence distributions, randomized estimators, and Bayesian posteriors. A point estimator can also be contrasted with a distribution estimator. Examples are given by confidence sets or credible sets. More generally, a point estimator can be contrasted with a set estimator. Point estimation can be contrasted with interval estimation: such interval estimates are typically either confidence intervals, in the case of frequentist inference, or credible intervals, in the case of Bayesian inference. More formally, it is the application of a point estimator to the data to obtain a point estimate. 
In statistics, point estimation involves the use of sample data to calculate a single value (known as a point estimate since it identifies a point in some parameter space) which is to serve as a "best guess" or "best estimate" of an unknown population parameter (for example, the population mean). Parameter estimation via sample statistics
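
To make the contrast between a point estimate and an interval estimate concrete, here is a minimal Python sketch using only the standard library. The data values and the function name `point_and_interval` are hypothetical. The interval uses a large-sample normal approximation, which is an assumption. For a sample as small as this one, a t-based interval would usually be preferred; the normal quantile is used here only to keep the sketch within the standard library.

```python
import statistics


def point_and_interval(sample, confidence=0.95):
    """Return the sample mean as a point estimate of the population mean,
    together with an approximate confidence interval for it.

    Assumption: a large-sample normal approximation to the sampling
    distribution of the mean (z quantile rather than a t quantile).
    """
    n = len(sample)
    x_bar = statistics.fmean(sample)                              # point estimate
    s = statistics.stdev(sample)                                  # sample std dev, divisor n - 1
    z = statistics.NormalDist().inv_cdf(0.5 + confidence / 2)     # about 1.96 for 95%
    half_width = z * s / n ** 0.5                                 # z times the standard error
    return x_bar, (x_bar - half_width, x_bar + half_width)


sample = [4.1, 5.3, 4.8, 5.0, 4.6, 5.2, 4.9, 5.1]   # hypothetical data
estimate, (low, high) = point_and_interval(sample)
print(f"point estimate: {estimate:.3f}")            # 4.875
print(f"approximate 95% interval: ({low:.3f}, {high:.3f})")
```

The single number `estimate` is the point estimate. The pair `(low, high)` is an interval estimate built from the same data.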
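
The preference for estimators with small mean square error links bias and variance through a standard decomposition. Here it is worked out for an estimator $T$ of $\theta$, with $\operatorname{Bias}(T) = E[T] - \theta$:

$$
\begin{aligned}
\operatorname{MSE}(T) &= E\big[(T - \theta)^2\big] \\
&= E\Big[\big((T - E[T]) + (E[T] - \theta)\big)^2\Big] \\
&= E\big[(T - E[T])^2\big] + 2\,(E[T] - \theta)\,E\big[T - E[T]\big] + (E[T] - \theta)^2 \\
&= \operatorname{Var}(T) + \big(\operatorname{Bias}(T)\big)^2,
\end{aligned}
$$

The cross term vanishes because $E\big[T - E[T]\big] = 0$. For an unbiased estimator the bias term is zero and $\operatorname{MSE}(T) = \operatorname{Var}(T)$. So comparing MSEs reduces to comparing variances, which is why the MSE-based notion of efficiency extends the variance-based one.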
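
The bias of the two usual variance estimators, and the trade-off between bias and variance, can be checked by simulation. This sketch makes three assumptions: a standard normal population ($\sigma^2 = 1$), samples of size $n = 5$, and the hypothetical helper names `plug_in_variance` and `bias_and_mse`. `statistics.variance` is the standard library's sample variance with divisor $n - 1$. Under normality, theory gives the following values:

- For $s^2$: bias $0$ and MSE $2\sigma^4/(n-1) = 0.5$.
- For the divisor-$n$ estimator: bias $-\sigma^2/n = -0.2$ and MSE $(2n-1)\sigma^4/n^2 = 0.36$.

The simulated values should therefore land near those figures.

```python
import random
import statistics


def plug_in_variance(sample):
    """Variance with divisor n; its expectation is (n - 1) / n * sigma^2, so it is biased."""
    m = statistics.fmean(sample)
    return sum((x - m) ** 2 for x in sample) / len(sample)


def bias_and_mse(estimator, true_value, n, trials, rng):
    """Monte Carlo estimates of Bias(T) = E[T] - theta and MSE(T) = E[(T - theta)^2]
    for samples of size n drawn from a standard normal population (an assumption)."""
    estimates = [estimator([rng.gauss(0.0, 1.0) for _ in range(n)])
                 for _ in range(trials)]
    bias = statistics.fmean(estimates) - true_value
    mse = statistics.fmean((t - true_value) ** 2 for t in estimates)
    return bias, mse


rng = random.Random(0)                 # fixed seed for reproducibility
n, trials, sigma2 = 5, 20_000, 1.0     # sigma2 is the true population variance
bias_s2, mse_s2 = bias_and_mse(statistics.variance, sigma2, n, trials, rng)
bias_pi, mse_pi = bias_and_mse(plug_in_variance, sigma2, n, trials, rng)
print(f"s^2 (divisor n-1):   bias {bias_s2:+.3f}, MSE {mse_s2:.3f}")
print(f"plug-in (divisor n): bias {bias_pi:+.3f}, MSE {mse_pi:.3f}")
```

The divisor-$n$ estimator is biased yet has the smaller MSE here. This is the situation in which a biased estimator with a small variance can be preferable to an unbiased one.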
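
A quick simulation illustrates consistency for the sample mean. It assumes an Exponential(1) population (true mean $\mu = 1$, variance 1), a tolerance $\varepsilon = 0.1$, and a hypothetical helper `sample_mean_behaviour`. Since $\bar{x}$ is unbiased with $\operatorname{Var}(\bar{x}) = \sigma^2/n$, its empirical variance should track $1/n$. The share of estimates that miss $\mu$ by more than $\varepsilon$ should also fall as $n$ grows.

```python
import random
import statistics


def sample_mean_behaviour(n, trials, eps, rng):
    """Simulate the sample mean for samples of size n from an Exponential(1)
    population (assumed: true mean mu = 1, variance 1).

    Returns the empirical variance of the estimates and the fraction of
    estimates that miss mu by more than eps.
    """
    mu = 1.0
    means = [statistics.fmean([rng.expovariate(1.0) for _ in range(n)])
             for _ in range(trials)]
    miss_rate = sum(abs(m - mu) > eps for m in means) / trials
    return statistics.pvariance(means), miss_rate


rng = random.Random(1)
results = {}
for n in (10, 100, 1000):
    var_hat, miss = sample_mean_behaviour(n, trials=1000, eps=0.1, rng=rng)
    results[n] = (var_hat, miss)
    print(f"n={n:5d}  Var(x_bar) ~ {var_hat:.5f} (theory 1/n = {1 / n:.5f})"
          f"  P(|x_bar - 1| > 0.1) ~ {miss:.3f}")
```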
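
The efficiency comparison between the mean and the median under normality can be estimated by simulation. This sketch assumes a standard normal population, an odd sample size $n = 101$, and a hypothetical helper `estimator_variances`. For large samples from a normal population, the ratio $\operatorname{Var}(\text{mean}) / \operatorname{Var}(\text{median})$ approaches $2/\pi \approx 0.637$. The mean is therefore the more efficient estimator of the centre.

```python
import random
import statistics


def estimator_variances(n, trials, rng):
    """Empirical variances of the sample mean and the sample median as
    estimators of the centre of a standard normal population (assumed)."""
    means, medians = [], []
    for _ in range(trials):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        means.append(statistics.fmean(sample))
        medians.append(statistics.median(sample))
    return statistics.pvariance(means), statistics.pvariance(medians)


rng = random.Random(2)
var_mean, var_median = estimator_variances(n=101, trials=4000, rng=rng)
ratio = var_mean / var_median   # efficiency of the median relative to the mean
print(f"Var(mean)   ~ {var_mean:.5f}")
print(f"Var(median) ~ {var_median:.5f}")
print(f"Var(mean) / Var(median) ~ {ratio:.3f}")
```

Replacing `rng.gauss` with draws from a different population can change which estimator comes out ahead. This matches the point that efficiency depends on the population distribution.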
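
The definition of sufficiency can be checked exactly in a small case. Assume $X_1, \ldots, X_n$ are i.i.d. Bernoulli($p$) and $T = \sum_i X_i$. Every sequence with $T = t$ has probability $p^t (1-p)^{n-t}$, and there are $\binom{n}{t}$ such sequences. So $P(X = x \mid T = t) = 1/\binom{n}{t}$ whatever $p$ is, and $T$ is therefore sufficient for $p$. The sketch below uses a hypothetical helper `conditional_given_sum` and exact fractions. It confirms this for $n = 4$, $t = 2$ and a few values of $p$.

```python
from fractions import Fraction
from itertools import product


def conditional_given_sum(n, t, p):
    """Exact P(X = x | T = t) for every 0/1 sequence x of length n with sum t,
    where X_1..X_n are i.i.d. Bernoulli(p) (an assumed model) and T = sum(X)."""
    seqs = [x for x in product((0, 1), repeat=n) if sum(x) == t]
    joint = {x: p ** t * (1 - p) ** (n - t) for x in seqs}   # P(X = x) for each such x
    p_t = sum(joint.values())                                 # P(T = t)
    return {x: joint[x] / p_t for x in seqs}


n, t = 4, 2
for p in (Fraction(1, 5), Fraction(1, 2), Fraction(9, 10)):
    cond = conditional_given_sum(n, t, p)
    print(f"p = {p}:", sorted(set(cond.values())))   # a single value, 1/6, for every p
```

The conditional distribution is uniform over the 6 sequences for every $p$. Once $T$ is known, the individual sequence carries no further information about $p$.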
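
Bayesian inference is based on the posterior distribution, so a Bayesian point estimate is usually a summary of that posterior, such as its mean or its mode (the MAP estimate). As an illustration only, this sketch assumes a Bernoulli model with a conjugate Beta($\alpha$, $\beta$) prior. For that model, the posterior after $s$ successes in $n$ trials is Beta($\alpha + s$, $\beta + n - s$). The function name is hypothetical.

```python
from fractions import Fraction


def beta_bernoulli_point_estimates(successes, trials, alpha=1, beta=1):
    """Posterior mean and posterior mode for a Bernoulli success probability
    under an assumed Beta(alpha, beta) prior. The prior is conjugate, so the
    posterior is Beta(alpha + successes, beta + trials - successes)."""
    a = alpha + successes
    b = beta + trials - successes
    posterior_mean = Fraction(a, a + b)
    # The interior mode (MAP estimate) (a - 1) / (a + b - 2) exists only when
    # a > 1 and b > 1; otherwise this sketch returns None for the mode.
    posterior_mode = Fraction(a - 1, a + b - 2) if a > 1 and b > 1 else None
    return posterior_mean, posterior_mode


mean, mode = beta_bernoulli_point_estimates(successes=7, trials=10)
print(mean, mode)  # 2/3 7/10
```

With a uniform Beta(1, 1) prior, 7 successes in 10 trials give the posterior Beta(8, 4). Its mean is 2/3, and its mode equals the sample proportion 7/10.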
